Executive Summary

Many organisations are experimenting with generative AI, but very few are ready to scale it securely and compliantly. In UK-regulated industries such as financial services, energy, healthcare, and retail, AI maturity is no longer optional. Boards, regulators, and risk committees increasingly expect demonstrable control, governance, and measurable value from AI initiatives.

An AI maturity model provides a structured evaluation across strategy, governance, data, technology, and people, enabling organisations to move from experimentation to enterprise capability.

This article introduces a practical scoring framework and explains how Surabhi Consulting supports regulated organisations in measuring and accelerating AI maturity safely.

Why AI Maturity Matters in 2026

AI maturity determines whether your organisation can:

- Deploy AI securely in cloud environments

- Manage regulatory and data protection exposure

- Scale AI beyond pilot programmes

- Measure ROI accurately

- Maintain audit-ready documentation

Without a structured maturity assessment, organisations risk:

- Shadow AI usage and uncontrolled experimentation

- Compliance gaps and audit findings

- Data leakage exposure

- Uncontrolled scaling and operational disruption

- Reputational damage

AI maturity is not about how many tools you use. It is about how well AI is governed and embedded into enterprise controls.

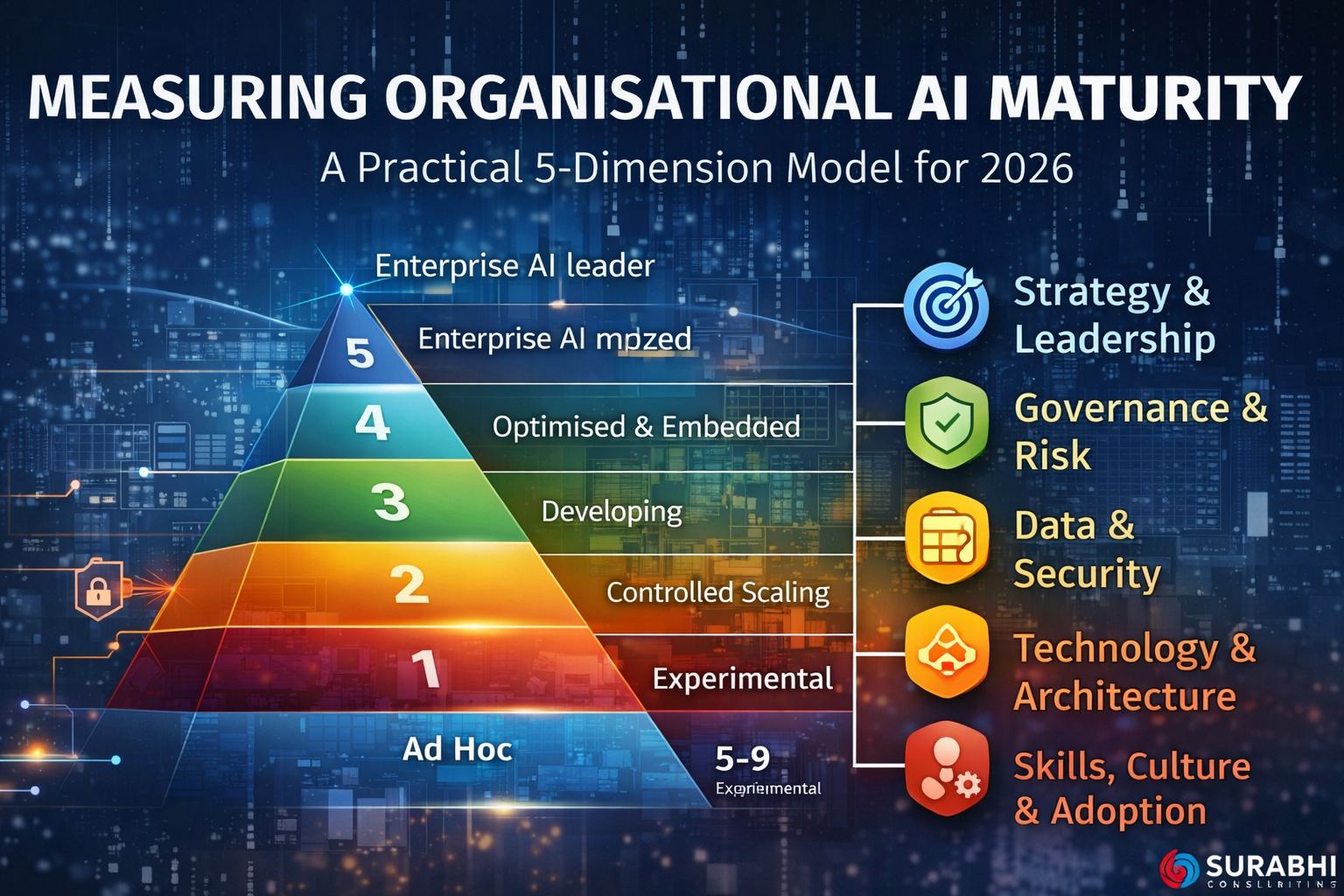

The 5-Dimension AI Maturity Scoring Model

This practical framework evaluates organisations across five dimensions. Each can be scored from Level 1 (Ad Hoc) to Level 5 (Optimised & Embedded).

1) Strategy & Leadership

Key questions:

- Is there a board-approved AI strategy?

- Is AI risk owned at the executive level?

- Are AI objectives aligned with business outcomes?

- Is ROI clearly defined?

| Level | Description |
|---|---|
| 1 | Isolated AI experiments with no strategy |
| 2 | Informal AI roadmap, limited executive oversight |
| 3 | Defined AI strategy aligned to business goals |
| 4 | Board-level governance and measurable KPIs |
| 5 | AI embedded into enterprise strategic planning |

2) Governance & Risk Management

Key questions:

- Do you maintain an AI risk register or RAID log?

- Is there an AI usage policy and governance structure?

- Are model validation and approval workflows documented?

- Is there human-in-the-loop oversight for high-risk outputs?

| Level | Description |
|---|---|
| 1 | No formal governance |
| 2 | Basic policy documentation exists but is not embedded |
| 3 | Risk categorisation framework implemented and used |
| 4 | Audit-ready documentation, monitoring and reporting |
| 5 | Continuous governance optimisation with board reporting |

3) Data & Security Readiness

Key questions:

- Is enterprise data classified and tagged?

- Are DLP controls integrated into AI workflows?

- Is sensitive data masked before AI processing?

- Are cloud AI endpoints secured with private networking and RBAC?

| Level | Description |
|---|---|
| 1 | Unstructured data usage with minimal controls |
| 2 | Basic data controls inconsistently applied |
| 3 | Data classification enforced and aligned with access rules |
| 4 | Secure AI cloud architecture deployed with monitoring |
| 5 | Continuous security optimisation and proactive risk mitigation |

4) Technology & Architecture

Key questions:

- Is AI integrated with enterprise systems (ERP, CRM, service management)?

- Are DevSecOps and MLOps implemented for AI deployments?

- Are prompt version control and approval workflows in place?

- Is model drift monitored and managed?

| Level | Description |
|---|---|
| 1 | Isolated pilots with limited engineering discipline |
| 2 | Basic cloud deployment without strong control layers |
| 3 | Secure API and access controls implemented |
| 4 | Integrated MLOps with monitoring and governance workflows |
| 5 | Fully optimised, scalable, and cost-controlled architecture |

5) Skills, Culture & Adoption

Key questions:

- Are employees trained in responsible AI usage?

- Is there a clear AI ownership model and operating rhythm?

- Are change management strategies in place?

- Is adoption measured and improved over time?

| Level | Description |
|---|---|
| 1 | No structured AI training or adoption plan |
| 2 | Limited awareness sessions and informal guidance |
| 3 | Defined training programme and role-based enablement |
| 4 | Responsible AI practices embedded into day-to-day culture |
| 5 | Continuous capability development with measurable outcomes |

How to Calculate Your AI Maturity Score

Score each dimension from 1 to 5, then total the results.

| Dimension | Example Score |
|---|---|
| Strategy & Leadership | 3 |
| Governance & Risk | 2 |
| Data & Security | 3 |
| Technology & Architecture | 4 |
| Skills, Culture & Adoption | 2 |

Total Score: 14 / 25 (3 + 2 + 3 + 4 + 2), which places this example in the Developing Stage band.

Maturity Bands:

- 5–9: Experimental Stage

- 10–15: Developing Stage

- 16–20: Controlled Scaling Stage

- 21–25: Enterprise AI Leader

This scoring helps prioritise investments and focus resources where risk reduction and value creation are most needed.
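For teams that want to record assessments in a repeatable way, the scoring and banding described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the function names (`total_score`, `maturity_band`, `weakest_dimensions`) are hypothetical, and treating the lowest-scoring dimensions as the first candidates for investment is an assumption about how the results might be prioritised.

```python
# Illustrative sketch of the 5-dimension scoring model described above.
# All names here are hypothetical helpers, not part of any published tool.

DIMENSIONS = (
    "Strategy & Leadership",
    "Governance & Risk",
    "Data & Security",
    "Technology & Architecture",
    "Skills, Culture & Adoption",
)

# (lowest total, highest total, band name), copied from the maturity bands.
BANDS = (
    (5, 9, "Experimental Stage"),
    (10, 15, "Developing Stage"),
    (16, 20, "Controlled Scaling Stage"),
    (21, 25, "Enterprise AI Leader"),
)


def total_score(scores: dict[str, int]) -> int:
    """Validate that every dimension has a 1-5 score, then sum them."""
    for name in DIMENSIONS:
        if name not in scores:
            raise ValueError(f"Missing score for dimension: {name}")
        value = scores[name]
        if not isinstance(value, int) or not 1 <= value <= 5:
            raise ValueError(f"Score for {name} must be an integer from 1 to 5")
    return sum(scores[name] for name in DIMENSIONS)


def maturity_band(total: int) -> str:
    """Map a total score (5-25) to its maturity band."""
    for low, high, band in BANDS:
        if low <= total <= high:
            return band
    raise ValueError("Total score must be between 5 and 25")


def weakest_dimensions(scores: dict[str, int]) -> list[str]:
    """Return the dimensions sharing the lowest score (assumed priorities)."""
    lowest = min(scores[name] for name in DIMENSIONS)
    return [name for name in DIMENSIONS if scores[name] == lowest]


# Example scores taken from the table above.
example_scores = {
    "Strategy & Leadership": 3,
    "Governance & Risk": 2,
    "Data & Security": 3,
    "Technology & Architecture": 4,
    "Skills, Culture & Adoption": 2,
}

total = total_score(example_scores)
print(f"Total Score: {total} / 25")            # Total Score: 14 / 25
print(f"Band: {maturity_band(total)}")         # Band: Developing Stage
print(f"Focus areas: {weakest_dimensions(example_scores)}")
```

With the example scores, the two dimensions scored at 2 (Governance & Risk and Skills, Culture & Adoption) are returned as focus areas. A real assessment would weigh these against regulatory exposure rather than relying on the raw score alone.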

Common Findings in UK Regulated Organisations

- Strong pilots but weak governance documentation

- Inconsistent data classification and limited DLP coverage

- Limited ROI tracking and unclear success metrics

- Undefined board oversight and inconsistent ownership

- Adoption challenges due to insufficient training

From Maturity Assessment to Action Plan

An effective AI maturity assessment should produce:

- Governance gap analysis and remediation plan

- Data risk heatmap

- Secure cloud AI architecture review

- ROI measurement framework

- 6–18 month AI roadmap

- Board-level reporting dashboard

How Surabhi Consulting Supports AI Maturity Acceleration

We support clients with:

- AI Maturity Assessment Workshops

- Governance & Risk Framework Design

- Secure AI Architecture Review

- ROI & Business Case Development

- Enterprise AI Roadmap & Board Reporting

Ready to assess your AI maturity?

Contact Surabhi Consulting to schedule an AI Maturity Assessment Workshop.

Conclusion

In 2026, organisational AI maturity is becoming a competitive and regulatory differentiator. Organisations that measure maturity honestly, close governance gaps early, secure cloud architecture properly, and align AI to strategic outcomes will scale safely and confidently.

Organisations that ignore AI maturity face regulatory intervention, operational disruption, and reputational harm.
